Coding Neural Network Back-Propagation Using C# part 1

Vote, and the second part will be released. The source code will be in the last part.


Back-propagation is the most common algorithm used to train neural networks. There are many ways that back-propagation can be implemented. This article presents a code implementation, using C#, which closely mirrors the terminology and explanation of back-propagation given in the Wikipedia entry on the topic.

You can think of a neural network as a complex mathematical function that accepts numeric inputs and generates numeric outputs. The values of the outputs are determined by the input values, the number of so-called hidden processing nodes, the hidden and output layer activation functions, and a set of weights and bias values.

A fully connected neural network with m inputs, h hidden nodes, and n outputs has (m * h) + h + (h * n) + n weights and biases. For example, a neural network with 4 inputs, 5 hidden nodes, and 3 outputs has (4 * 5) + 5 + (5 * 3) + 3 = 43 weights and biases. Training a neural network is the process of finding values for the weights and biases so that, for a set of training data with known input and output values, the computed outputs of the network closely match the known outputs.
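
The counting formula above can be checked with a few lines of code. This is a minimal sketch; the class and method names (`WeightCount`, `NumWeights`) are illustrative and are not part of the demo program.

```csharp
using System;

public static class WeightCount
{
    // Number of weights and biases in a fully connected network with
    // numInput inputs, numHidden hidden nodes, and numOutput outputs.
    // (Hypothetical helper, not taken from the demo.)
    public static int NumWeights(int numInput, int numHidden, int numOutput)
    {
        int inputToHidden = numInput * numHidden;    // input-to-hidden weights
        int hiddenBiases = numHidden;                // one bias per hidden node
        int hiddenToOutput = numHidden * numOutput;  // hidden-to-output weights
        int outputBiases = numOutput;                // one bias per output node
        return inputToHidden + hiddenBiases + hiddenToOutput + outputBiases;
    }
}
```

Calling `WeightCount.NumWeights(4, 5, 3)` returns 43, matching the 4-5-3 example.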

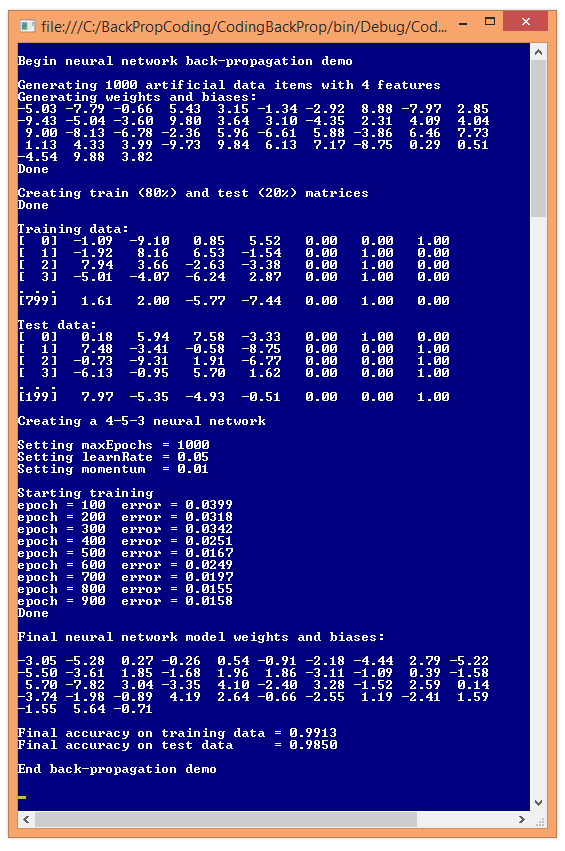

The best way to see where this article is headed is to examine the demo program in Figure 1. The demo program begins by generating 1,000 synthetic data items. Each data item has four input values and three output values. For example, one of the synthetic data items is:

Figure 1. Back-Propagation Training in Action


-1.09 -9.10 0.85 5.52 0 0 1

The four input values are all between -10.0 and +10.0 and correspond to predictor values that have been normalized so that values below zero are smaller than average, and values above zero are greater than average. The three output values correspond to a variable to predict that can take on one of three categorical values. For example, you might want to predict the political leaning of a person: conservative, moderate or liberal. Using 1-of-N encoding, conservative is (1, 0, 0), moderate is (0, 1, 0), and liberal is (0, 0, 1). So, for the example data item, if the predictor variables are age, income, education, and debt, the data item represents a person who is younger than average, has much lower income than average, is somewhat more educated than average, and has higher debt than average. The person has a liberal political view.
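
The 1-of-N encoding described above can be sketched as follows. The helper name `OneOfN.Encode` and the class ordering (conservative = 0, moderate = 1, liberal = 2) are assumptions made for illustration; the ordering simply follows the vectors listed in the paragraph.

```csharp
using System;

public static class OneOfN
{
    // Returns a vector of length numClasses with 1.0 at classIndex
    // and 0.0 everywhere else. (Hypothetical helper, not from the demo.)
    public static double[] Encode(int classIndex, int numClasses)
    {
        if (classIndex < 0 || classIndex >= numClasses)
            throw new ArgumentOutOfRangeException(nameof(classIndex));

        double[] result = new double[numClasses]; // C# initializes to 0.0
        result[classIndex] = 1.0;
        return result;
    }
}
```

Under the assumed ordering, `OneOfN.Encode(2, 3)` produces (0, 0, 1), the encoding for liberal.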

After the 1,000 data items were generated, the demo program split the data randomly into an 800-item training set and a 200-item test set. The training set is used to create the neural network model, and the test set is used to estimate the accuracy of the model.

After the data was split, the demo program instantiated a neural network with five hidden nodes. The number of hidden nodes is arbitrary and in realistic scenarios must be determined by trial and error. Next, the demo sets the values of the back-propagation parameters. The maximum number of training iterations, maxEpochs, was set to 1,000. The learning rate, learnRate, controls how fast training works and was set to 0.05. The momentum rate, momentum, is an optional parameter to increase the speed of training and was set to 0.01. Training parameter values must be determined by trial and error.

During training, the demo program calculated and printed the mean squared error every 100 epochs. In general, the error decreased over time, but there were a few jumps in error. This is typical behavior when using back-propagation. When training finished, the demo displayed the values of the 43 weights and biases found. These values, along with the number of hidden nodes, essentially define the neural network model.
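
As a rough illustration of the error measure, here is a minimal sketch of a mean squared error calculation. It assumes the error is the sum of squared differences over all output values, averaged per data item; the demo's own `Error` method is not shown in this part, so the names and the exact averaging convention here are assumptions.

```csharp
using System;

public static class ErrorMetrics
{
    // Mean squared error between computed outputs and target outputs.
    // Assumption: squared differences are summed over every output value
    // and then divided by the number of data items (rows).
    public static double MeanSquaredError(double[][] computed, double[][] targets)
    {
        double sumSquaredError = 0.0;
        for (int i = 0; i < computed.Length; ++i)
        {
            for (int j = 0; j < computed[i].Length; ++j)
            {
                double diff = targets[i][j] - computed[i][j];
                sumSquaredError += diff * diff;
            }
        }
        return sumSquaredError / computed.Length;
    }
}
```

With this convention, a perfect model scores 0.0, and larger values mean the computed outputs are further from the targets.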

The demo concluded by using the weights and bias values to calculate the predictive accuracy of the model on the training data (99.13 percent, or 793 correct out of 800) and on the test data (98.50 percent, or 197 correct out of 200). The demo didn't use the resulting model to make a prediction, but the model could be used that way. For example, if the model is fed input values (1.0, 2.0, 3.0, 4.0), the predicted output is (0, 1, 0), which corresponds to a political moderate.
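
Classification accuracy of this kind is usually computed by comparing the position of the largest computed output with the position of the 1 in the target vector. The sketch below assumes that approach; the method names echo `Accuracy` and `MaxIndex` from the demo, but the bodies and the signature (which takes precomputed outputs rather than a network) are illustrative guesses, not the article's code.

```csharp
using System;

public static class AccuracyMetrics
{
    // Index of the largest value in the vector (first one wins on ties).
    public static int MaxIndex(double[] vector)
    {
        int bigIndex = 0;
        for (int i = 1; i < vector.Length; ++i)
            if (vector[i] > vector[bigIndex]) bigIndex = i;
        return bigIndex;
    }

    // Fraction of items whose largest computed output lines up
    // with the 1 in the corresponding 1-of-N target vector.
    public static double Accuracy(double[][] computed, double[][] targets)
    {
        int numCorrect = 0;
        for (int i = 0; i < computed.Length; ++i)
            if (MaxIndex(computed[i]) == MaxIndex(targets[i]))
                ++numCorrect;
        return (double)numCorrect / computed.Length;
    }
}
```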

This article assumes you have at least intermediate level developer skills and a basic understanding of neural networks but does not assume you are an expert using the back-propagation algorithm. The demo program is too long to present in its entirety here, but complete source code is available in the download that accompanies this article. All normal error checking has been removed to keep the main ideas as clear as possible.

The Demo Program

To create the demo program, I launched Visual Studio, selected the C# console application program template, and named the project CodingBackProp. The demo has no significant Microsoft .NET Framework version dependencies, so any relatively recent version of Visual Studio should work. After the template code loaded, in the Solution Explorer window I renamed file Program.cs to BackPropProgram.cs and Visual Studio automatically renamed class Program for me.

The overall structure of the demo program is presented in Listing 1. I removed unneeded using statements that were generated by the Visual Studio console application template, leaving just the one reference to the top-level System namespace.

Listing 1: Demo Program Structure

    using System;

    namespace CodingBackProp
    {
        class BackPropProgram
        {
            static void Main(string[] args)
            {
                Console.WriteLine("Begin back-propagation demo");
                ...
                Console.WriteLine("End back-propagation demo");
                Console.ReadLine();
            }

            public static void ShowMatrix(double[][] matrix,
                int numRows, int decimals, bool indices) { . . }

            public static void ShowVector(double[] vector,
                int decimals, int lineLen, bool newLine) { . . }

            static double[][] MakeAllData(int numInput,
                int numHidden, int numOutput, int numRows,
                int seed) { . . }

            static void SplitTrainTest(double[][] allData,
                double trainPct, int seed, out double[][] trainData,
                out double[][] testData) { . . }
        } // Program

        public class NeuralNetwork
        {
            private int numInput;
            private int numHidden;
            private int numOutput;

            private double[] inputs;
            private double[][] ihWeights;
            private double[] hBiases;
            private double[] hOutputs;
            private double[][] hoWeights;
            private double[] oBiases;
            private double[] outputs;

            private Random rnd;

            public NeuralNetwork(int numInput, int numHidden,
                int numOutput) { . . }

            private static double[][] MakeMatrix(int rows,
                int cols, double v) { . . }

            private void InitializeWeights() { . . }

            public void SetWeights(double[] weights) { . . }

            public double[] GetWeights() { . . }

            public double[] ComputeOutputs(double[] xValues) { . . }

            private static double HyperTan(double x) { . . }

            private static double[] Softmax(double[] oSums) { . . }

            public double[] Train(double[][] trainData,
                int maxEpochs, double learnRate,
                double momentum) { . . }

            private void Shuffle(int[] sequence) { . . }

            private double Error(double[][] trainData) { . . }

            public double Accuracy(double[][] testData) { . . }

            private static int MaxIndex(double[] vector) { . . }
        } // NeuralNetwork
    } // ns

All the control logic is in the Main method and all the classification logic is in a program-defined NeuralNetwork class. Helper method MakeAllData generates a synthetic data set. Method SplitTrainTest splits the synthetic data into training and test sets. Methods ShowMatrix and ShowVector are used to display training and test data, and neural network weights.
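
Listing 1 names two activation helpers, HyperTan and Softmax, but elides their bodies. The following is a minimal sketch of what such functions commonly look like, assuming HyperTan is the standard hyperbolic tangent and Softmax uses the usual subtract-the-maximum trick for numerical stability. It is not the article's implementation, and the wrapper class `Activations` is hypothetical.

```csharp
using System;

public static class Activations
{
    // Hyperbolic tangent: squashes any input into the range (-1, +1).
    public static double HyperTan(double x)
    {
        return Math.Tanh(x);
    }

    // Softmax: turns raw output sums into values that are all
    // positive and sum to 1.0, so they can be read as probabilities.
    public static double[] Softmax(double[] oSums)
    {
        // Subtracting the largest sum avoids overflow in Math.Exp
        // without changing the result.
        double max = oSums[0];
        for (int i = 1; i < oSums.Length; ++i)
            if (oSums[i] > max) max = oSums[i];

        double scale = 0.0;
        double[] result = new double[oSums.Length];
        for (int i = 0; i < oSums.Length; ++i)
        {
            result[i] = Math.Exp(oSums[i] - max);
            scale += result[i];
        }
        for (int i = 0; i < result.Length; ++i)
            result[i] /= scale;
        return result;
    }
}
```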

The Main method (with a few minor edits to save space) begins by preparing to create the synthetic data:

    static void Main(string[] args)
    {
        Console.WriteLine("Begin back-propagation demo");
        int numInput = 4; // number features
        int numHidden = 5;
        int numOutput = 3; // number of classes for Y
        int numRows = 1000;
        int seed = 1; // gives nice demo

Next, the synthetic data is created:

    Console.WriteLine("\nGenerating " + numRows +
        " artificial data items with " + numInput + " features");
    double[][] allData = MakeAllData(numInput, numHidden,
        numOutput, numRows, seed);
    Console.WriteLine("Done");

To create the 1,000-item synthetic data set, helper method MakeAllData creates a local neural network with random weights and bias values. Then, random input values are generated, the output is computed by the local neural network using the random weights and bias values, and the output is converted to 1-of-N format.
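
Two of the steps described above, generating random inputs and converting a computed output to 1-of-N format, can be sketched in isolation. The body of MakeAllData is not shown in this part, so the helper names below are hypothetical. The sketch assumes inputs are drawn uniformly from -10.0 (inclusive) to +10.0 (exclusive), and that the largest computed output marks the predicted class.

```csharp
using System;

public static class SyntheticData
{
    // Generates numInput random values in the range [-10.0, +10.0).
    public static double[] RandomInputs(int numInput, Random rnd)
    {
        const double lo = -10.0;
        const double hi = 10.0;
        double[] inputs = new double[numInput];
        for (int i = 0; i < numInput; ++i)
            inputs[i] = (hi - lo) * rnd.NextDouble() + lo;
        return inputs;
    }

    // Converts raw outputs such as (0.2, 0.1, 0.7) to 1-of-N form
    // such as (0, 0, 1) by placing a 1 at the largest value.
    public static double[] ToOneOfN(double[] outputs)
    {
        int maxIndex = 0;
        for (int i = 1; i < outputs.Length; ++i)
            if (outputs[i] > outputs[maxIndex]) maxIndex = i;

        double[] result = new double[outputs.Length]; // all 0.0
        result[maxIndex] = 1.0;
        return result;
    }
}
```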

Next, the demo program splits the synthetic data into training and test sets using these statements:

    Console.WriteLine("Creating train and test matrices");
    double[][] trainData;
    double[][] testData;
    SplitTrainTest(allData, 0.80, seed,
        out trainData, out testData);
    Console.WriteLine("Done");

    Console.WriteLine("Training data:");
    ShowMatrix(trainData, 4, 2, true);
    Console.WriteLine("Test data:");
    ShowMatrix(testData, 4, 2, true);
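
The body of SplitTrainTest is not shown in this part. One plausible way to write it, keeping the signature from Listing 1, is to shuffle the row indices and hand the first trainPct fraction to the training set. The sketch below assumes a Fisher-Yates shuffle and copies rows by reference; the wrapper class `DataSplit` is hypothetical, and the actual demo code may differ.

```csharp
using System;

public static class DataSplit
{
    // Randomly splits allData into a training set holding trainPct of
    // the rows and a test set holding the rest. Rows are shared by
    // reference, not deep-copied.
    public static void SplitTrainTest(double[][] allData,
        double trainPct, int seed, out double[][] trainData,
        out double[][] testData)
    {
        Random rnd = new Random(seed);
        int totRows = allData.Length;
        int numTrainRows = (int)(totRows * trainPct);
        int numTestRows = totRows - numTrainRows;

        // Fisher-Yates shuffle of the row indices.
        int[] indices = new int[totRows];
        for (int i = 0; i < totRows; ++i)
            indices[i] = i;
        for (int i = totRows - 1; i > 0; --i)
        {
            int r = rnd.Next(i + 1); // random index in [0, i]
            int tmp = indices[r];
            indices[r] = indices[i];
            indices[i] = tmp;
        }

        trainData = new double[numTrainRows][];
        testData = new double[numTestRows][];
        for (int i = 0; i < numTrainRows; ++i)
            trainData[i] = allData[indices[i]];
        for (int i = 0; i < numTestRows; ++i)
            testData[i] = allData[indices[numTrainRows + i]];
    }
}
```

Passing the same seed gives the same split every time, which makes demo runs repeatable.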

Next, the neural network is instantiated like so:

    Console.WriteLine("Creating a " + numInput + "-" +
        numHidden + "-" + numOutput + " neural network");
    NeuralNetwork nn = new NeuralNetwork(numInput,
        numHidden, numOutput);

The neural network has four inputs (one for each feature) and three outputs (because the Y variable can be one of three categorical values). The choice of five hidden processing units for the neural network is the same as the number of hidden units used to generate the synthetic data, but finding a good number of hidden units in a realistic scenario requires trial and error. Next, the back-propagation parameter values are assigned with these statements:

    int maxEpochs = 1000;
    double learnRate = 0.05;
    double momentum = 0.01;
    Console.WriteLine("Setting maxEpochs = " +
        maxEpochs);
    Console.WriteLine("Setting learnRate = " +
        learnRate.ToString("F2"));
    Console.WriteLine("Setting momentum = " +
        momentum.ToString("F2"));

Determining when to stop neural network training is a difficult problem. Here, using 1,000 iterations was arbitrary. The learning rate controls how much each weight and bias value can change in each update step. Larger values increase the speed of training at the risk of overshooting optimal weight values. The momentum rate helps prevent training from getting stuck with local, non-optimal weight values and also prevents oscillation where training never converges to stable values.
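
To make the roles of the learning rate and momentum concrete, here is a toy sketch that applies a momentum-style update to a single weight. It minimizes the function f(w) = (w - 3)^2 rather than a neural network error, and it uses the common textbook form where each step is the scaled negative gradient plus a fraction of the previous step. The function, the names, and the sign convention are assumptions for illustration and may not match the demo's Train method.

```csharp
using System;

public static class MomentumDemo
{
    // Gradient descent with momentum on f(w) = (w - 3)^2,
    // whose minimum is at w = 3. (Toy example, not from the demo.)
    public static double Minimize(double learnRate, double momentum,
        int maxEpochs)
    {
        double w = 0.0;         // starting weight
        double prevStep = 0.0;  // step taken in the previous epoch

        for (int epoch = 0; epoch < maxEpochs; ++epoch)
        {
            double gradient = 2.0 * (w - 3.0);  // derivative of f at w
            // The learning rate scales the gradient; the momentum term
            // adds a fraction of the previous step.
            double step = -learnRate * gradient + momentum * prevStep;
            w += step;
            prevStep = step;
        }
        return w;
    }
}
```

With the demo's settings, `MomentumDemo.Minimize(0.05, 0.01, 1000)` ends up very close to 3.0. A much larger learning rate on this function (for example 1.5) makes each step overshoot the minimum by more than the previous one, which is the risk the paragraph above describes.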

Thorough post, though here is some advice: try putting all the code into markdown code blocks using " ``` ". Also, if you copied any code or snippets, try crediting the original author or referencing the source accordingly.

for example:

Thanks. It's my first post.

Hi! I am a robot. I just upvoted you! I found similar content that readers might be interested in:

https://visualstudiomagazine.com/articles/2015/04/01/back-propagation-using-c.aspx

Thank