Awan LLM - Cost-effective LLM inference API for startups & developers

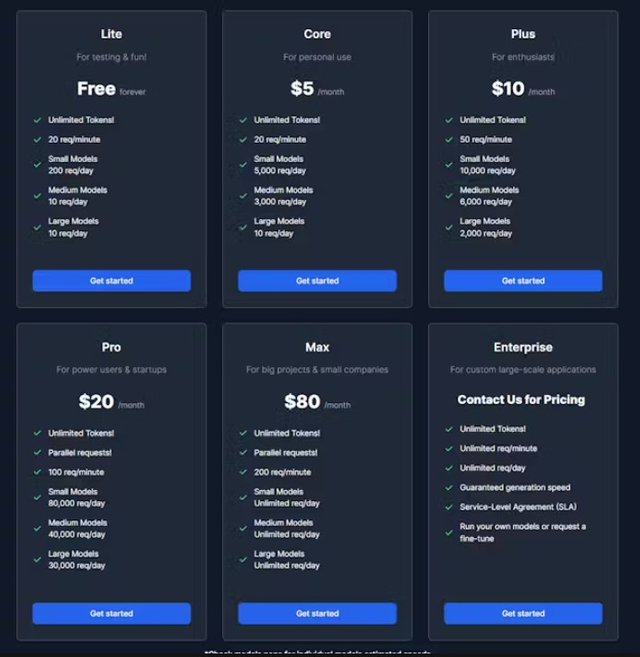

Hunter's comment

Awan LLM is a cloud provider for LLM inference that focuses on cost and reliability. Unlike other providers, we don't charge per token, a pricing model that can cause costs to balloon. Instead, we charge a monthly fee. We achieve this by hosting our data centers in strategic cities.

This is posted on Steemhunt - A place where you can dig products and earn STEEM.
